If you’ve spent any time shooting or editing video, you already know one thing: video files get big, fast. Even short clips can balloon into massive files if they’re recorded at high resolutions, high frame rates, or with minimal compression. That’s why the world of digital video relies heavily on compression, the set of techniques used to make video files smaller while keeping them visually acceptable.

One major type of compression happens inside the CODEC (COmpressor/DECompressor). This is where your video file is actually packed down into something manageable. But alongside the CODEC's own compression, there’s another technique, usually applied before the CODEC does its work, that shrinks files further, often without a visually noticeable difference.

That technique is chroma subsampling.

And while some people casually call it “color compression,” that label can confuse beginners. Instead, think of chroma subsampling as prioritizing what the human eye sees best.

Before we understand why subsampling works, we need to talk about how digital video stores color.

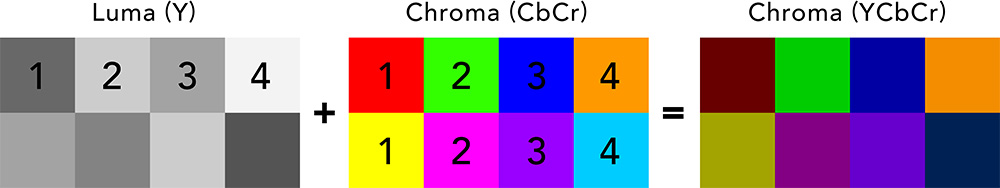

Most people assume video is stored in RGB (Red, Green, Blue). That’s what they see in Photoshop and in most displays. But digital video rarely stores pixels that way. Instead, it uses a color model called YCbCr, where:

Y = Luminance (brightness)

Cb = Blue-difference chroma

Cr = Red-difference chroma
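To make the three channels concrete, here is a minimal sketch of converting one RGB pixel to YCbCr. It assumes the Rec.709 luma weights and full-range 8-bit values; real formats may use different weights or a limited "video" range, and the function name is only illustrative.

```python
# Assumption: Rec.709 luma weights and full-range 8-bit values (0-255).
# Other standards (for example Rec.601 or Rec.2020) use different weights.
KR, KG, KB = 0.2126, 0.7152, 0.0722


def rgb_to_ycbcr(r, g, b):
    """Convert one 8-bit RGB pixel to (Y, Cb, Cr).

    Y is a weighted sum of R, G, and B (brightness).
    Cb and Cr are scaled blue-minus-Y and red-minus-Y differences,
    centered on 128 so that a colorless gray pixel sits at 128.
    """
    y = KR * r + KG * g + KB * b
    cb = 128 + (b - y) / (2 * (1 - KB))
    cr = 128 + (r - y) / (2 * (1 - KR))
    return y, cb, cr


# A gray pixel carries no color: Cb and Cr land on the neutral value.
print(rgb_to_ycbcr(200, 200, 200))
# A blue pixel pushes Cb above neutral and Cr below it.
print(rgb_to_ycbcr(0, 0, 255))
```

Notice that green has no channel of its own: it is recovered from Y, Cb, and Cr together when the image is converted back to RGB for display.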

This separation into brightness (Y) and color (C) channels is intentional. The human eye is far more sensitive to detail in brightness than to detail in color. We see sharp edges, textures, and patterns mostly through luminance, not chroma. This fact is the entire reason chroma subsampling works.

If luminance carries most of the important detail, and chrominance carries less, then we can reduce the amount of chrominance information without the viewer really noticing.

That’s chroma subsampling.

Chroma subsampling means:

We keep full detail for the brightness information but store less detail for the color information.

It’s written using a ratio like 4:4:4, 4:2:2, or 4:2:0.

Let’s back up for a second and start with an entire image. To explain subsampling notation, we need to zoom in to a block of 8 pixels: 4 pixels wide and 2 rows tall. Remember, chroma subsampling reduces color information at a very small scale (pixel level), so it is less noticeable in the final image.


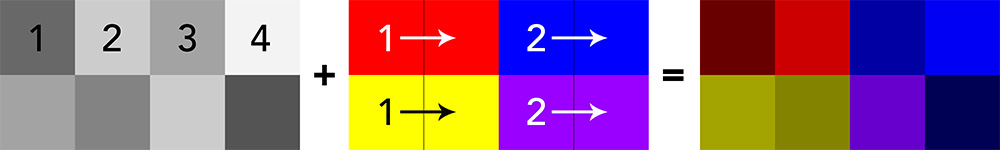

These three numbers describe how much luminance and chrominance information is preserved among a group of four pixels. The key to remember:

The first number is always 4. This means all four pixels retain full luminance (Y) information. Now, since we are working with 8-pixel blocks, it is assumed that this number 4 is also used in the second row, retaining full luminance information across the entire image.

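From here, the next two numbers describe how much chroma detail is stored. The second number is how many chroma samples are kept across the four pixels of the top row. The third number is how many are kept in the bottom row, where 0 means the bottom row simply reuses the chroma from the row above. Here is how that plays out for each scheme: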

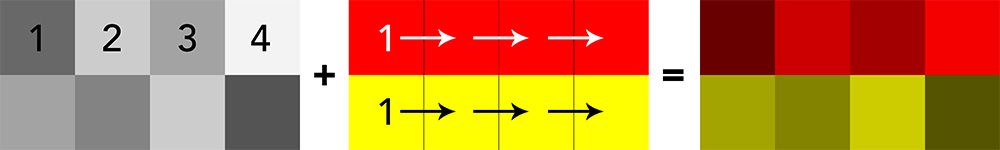

In 4:4:4, no chroma is reduced.

Every pixel has full color information.

This is the gold standard for acquisition, compositing, VFX, and color grading.

Con – Large files, lots of data.

In 4:2:2, chroma resolution is cut in half horizontally.

Still extremely good for professional acquisition.

Common in broadcast-level cameras and formats like ProRes 422, DNxHR, and AVC-Intra.

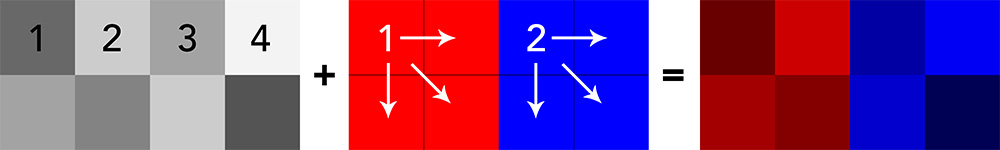

In 4:2:0, chroma resolution is cut in half both horizontally and vertically.

This is where the compromise becomes noticeable in heavy color grading or green-screen work.

Widely used in consumer cameras, DSLRs, mirrorless systems, phones, and many delivery formats (like H.264, AVCHD).

Also used for broadcast delivery, for example in ATSC and DVB transmissions of 1080i HD.

4:2:0 is the most common subsampling format in the world, not because it’s great, but because it’s efficient.
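Here is a rough sketch of what the reduction looks like on actual numbers. It assumes the simplest possible approach, averaging neighboring chroma values into one sample; real encoders may use other filters and sample positions, and the function name is made up for illustration.

```python
def subsample_chroma(plane, h_factor, v_factor):
    """Average each h_factor x v_factor block of a chroma plane into one sample.

    plane is a list of rows. Its width and height are assumed to be
    multiples of h_factor and v_factor respectively.
    """
    height, width = len(plane), len(plane[0])
    out = []
    for top in range(0, height, v_factor):
        row = []
        for left in range(0, width, h_factor):
            block = [plane[y][x]
                     for y in range(top, top + v_factor)
                     for x in range(left, left + h_factor)]
            row.append(sum(block) / len(block))
        out.append(row)
    return out


# Cb values for a block 4 pixels wide and 2 rows tall (one value per pixel,
# which is what 4:4:4 stores).
cb = [[100, 110, 120, 130],
      [140, 150, 160, 170]]

cb_422 = subsample_chroma(cb, 2, 1)  # halve horizontally only
cb_420 = subsample_chroma(cb, 2, 2)  # halve horizontally and vertically

print(cb_422)  # [[105.0, 125.0], [145.0, 165.0]]
print(cb_420)  # [[125.0, 145.0]]
```

The same step would be applied to the Cr plane, while the Y plane is left untouched. On playback, the stored chroma samples are stretched back over the pixels they were averaged from.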

You may also come across 4:1:1, though it’s much less common today than formats like 4:2:2 or 4:2:0.

In 4:1:1, luminance (brightness) is still fully preserved across all four pixels, but chroma (color) is sampled only once across those four pixels horizontally. That means color resolution is reduced even more than in 4:2:2.

4:1:1 = Full luminance, very limited horizontal color detail

This format was used in older digital video formats like DV (especially in NTSC regions), but it has largely been phased out in favor of 4:2:0, which stores the same amount of chroma data but spreads the loss across both dimensions instead of concentrating it horizontally.

When you encounter four numbers instead of three, the final “4” doesn’t refer to chroma at all.

It represents the alpha channel, the transparency information used in graphics, titles, overlays, and compositing.

So:

4:4:4:4 means

Full luminance + full chroma + full alpha.

This appears in certain high-end codecs that support transparency (e.g., ProRes 4444).

It doesn’t mean “extra color.” It means you’re storing how transparent each pixel is, so that whatever is layered behind the image can show through.
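To compare the notations side by side, the following sketch counts how many samples are stored per pixel before any CODEC compression. It assumes the common reading of the notation (a block 4 pixels wide and 2 rows tall, with the second and third numbers giving the chroma samples kept in the top and bottom rows) and treats an optional fourth number as alpha samples per row. The function name is hypothetical.

```python
def samples_per_pixel(notation):
    """Average number of stored samples per pixel for 'J:a:b' or 'J:a:b:alpha'.

    Assumed reading: a block J pixels wide and 2 rows tall, with J luma
    samples per row, a chroma samples in the top row, and b in the bottom
    row. Each chroma sample position holds two values (Cb and Cr).
    """
    parts = [int(p) for p in notation.split(":")]
    j, a, b = parts[:3]
    alpha = parts[3] if len(parts) > 3 else 0

    pixels = 2 * j
    luma = 2 * j               # every pixel keeps its own Y
    chroma = 2 * (a + b)       # one Cb and one Cr per chroma position
    alpha_samples = 2 * alpha  # alpha on both rows, if present
    return (luma + chroma + alpha_samples) / pixels


for name in ("4:4:4", "4:2:2", "4:2:0", "4:1:1", "4:4:4:4"):
    print(name, samples_per_pixel(name))
# 4:4:4 3.0
# 4:2:2 2.0
# 4:2:0 1.5
# 4:1:1 1.5
# 4:4:4:4 4.0
```

Under these assumptions, 4:2:0 carries half the raw data of 4:4:4, and 4:1:1 carries exactly as much as 4:2:0. Final file sizes still depend on the CODEC and its settings, so treat these as relative starting points rather than predictions.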

Chroma subsampling is just one piece of the larger color puzzle in video. To go deeper, it’s worth exploring:

Color space (Rec.709, Rec.2020, etc.)

Gamut (the range of colors that can be represented)

Bit depth (how many shades per channel)

Each of these plays a role in overall image quality, and understanding them together gives you a much clearer picture of how digital video really works.